CONVERSATIONAL AI PATTERNS

A sidebar that remembers so the analyst doesn't have to

A conversational AI keeps a complete record of every past chat; the interface's job is to make that memory usable, not bury it behind truncated first sentences. Each conversation gets an auto-generated title that names what it was about. Timestamps replace date-math. Grouping by time window makes past work scannable by structure instead of row by row. The AI's memory becomes a surface the analyst navigates, not one they have to reconstruct.

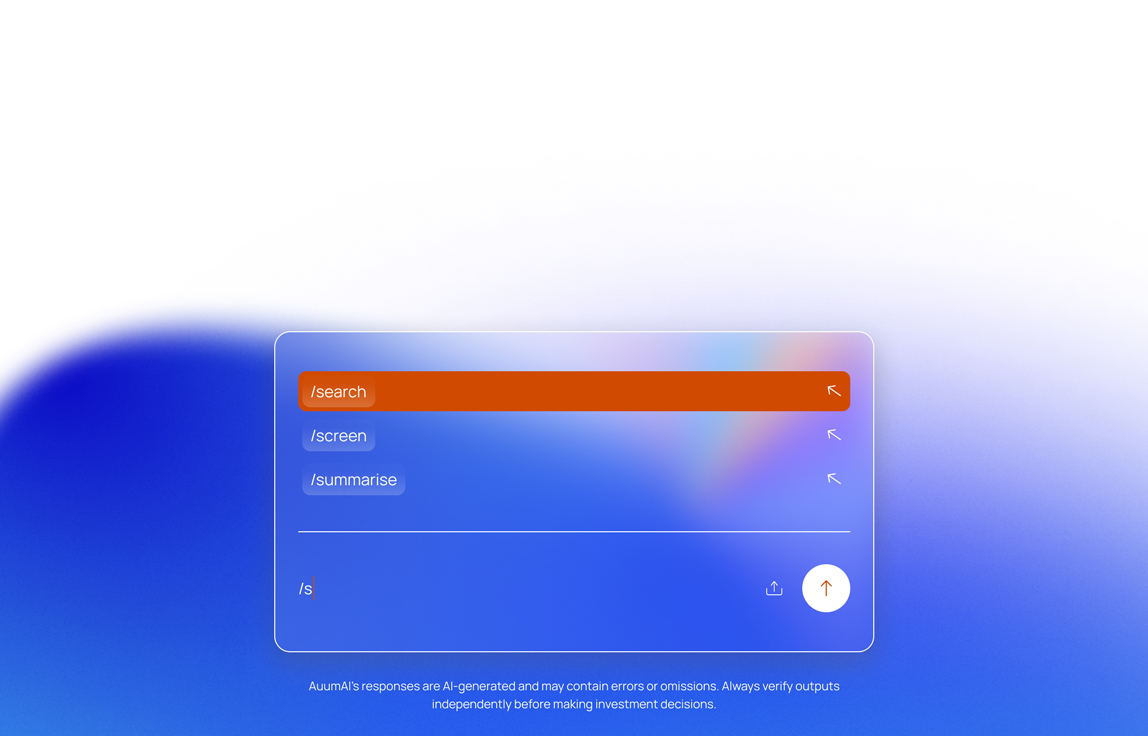
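Grouping by time window is simple to sketch. The TypeScript below is a hypothetical illustration, not AuumAI's code: the `Conversation` shape, the window labels, and the 7- and 30-day cut-offs are assumptions. Days are counted midnight to midnight in local time, so a chat from late last night lands under "Yesterday" rather than "Today".

```typescript
// Hypothetical shape of a stored conversation; field names are illustrative.
interface Conversation {
  id: string;
  title: string;   // auto-generated title naming what the chat was about
  updatedAt: Date; // time of last activity
}

type TimeWindow = "Today" | "Yesterday" | "Previous 7 days" | "Previous 30 days" | "Older";

// Display order of the sidebar sections (assumed).
const WINDOW_ORDER: TimeWindow[] = ["Today", "Yesterday", "Previous 7 days", "Previous 30 days", "Older"];

const DAY_MS = 24 * 60 * 60 * 1000;

// Local midnight of the given date, in epoch milliseconds.
function startOfDay(d: Date): number {
  return new Date(d.getFullYear(), d.getMonth(), d.getDate()).getTime();
}

function windowFor(updatedAt: Date, now: Date): TimeWindow {
  // Whole calendar days between the two dates; rounding absorbs DST shifts.
  const days = Math.round((startOfDay(now) - startOfDay(updatedAt)) / DAY_MS);
  if (days <= 0) return "Today";
  if (days === 1) return "Yesterday";
  if (days < 7) return "Previous 7 days";
  if (days < 30) return "Previous 30 days";
  return "Older";
}

function groupByWindow(convos: Conversation[], now: Date = new Date()): Map<TimeWindow, Conversation[]> {
  const groups = new Map<TimeWindow, Conversation[]>();
  // Newest first, so each section lists the most recent work at the top.
  const sorted = [...convos].sort((a, b) => b.updatedAt.getTime() - a.updatedAt.getTime());
  for (const c of sorted) {
    const w = windowFor(c.updatedAt, now);
    const bucket = groups.get(w) ?? [];
    bucket.push(c);
    groups.set(w, bucket);
  }
  // Rebuild the map in display order, skipping windows with no conversations.
  return new Map(
    WINDOW_ORDER.filter((w) => groups.has(w)).map((w): [TimeWindow, Conversation[]] => [w, groups.get(w)!]),
  );
}
```

Because the grouping is derived from `updatedAt` at render time, a conversation moves from "Today" to "Yesterday" on its own without any stored label changing.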

The / command for users who can't afford to be misunderstood

Free-text prompts are fine for casual use. For an LP analyst reviewing a Fund IV, "compare X and Y" has to mean exactly that.

The / pattern turns the action into a typed object the user chooses deliberately (/compare, /summarise, /draft-memo), so the AI isn't parsing natural language for an intent it could misread.

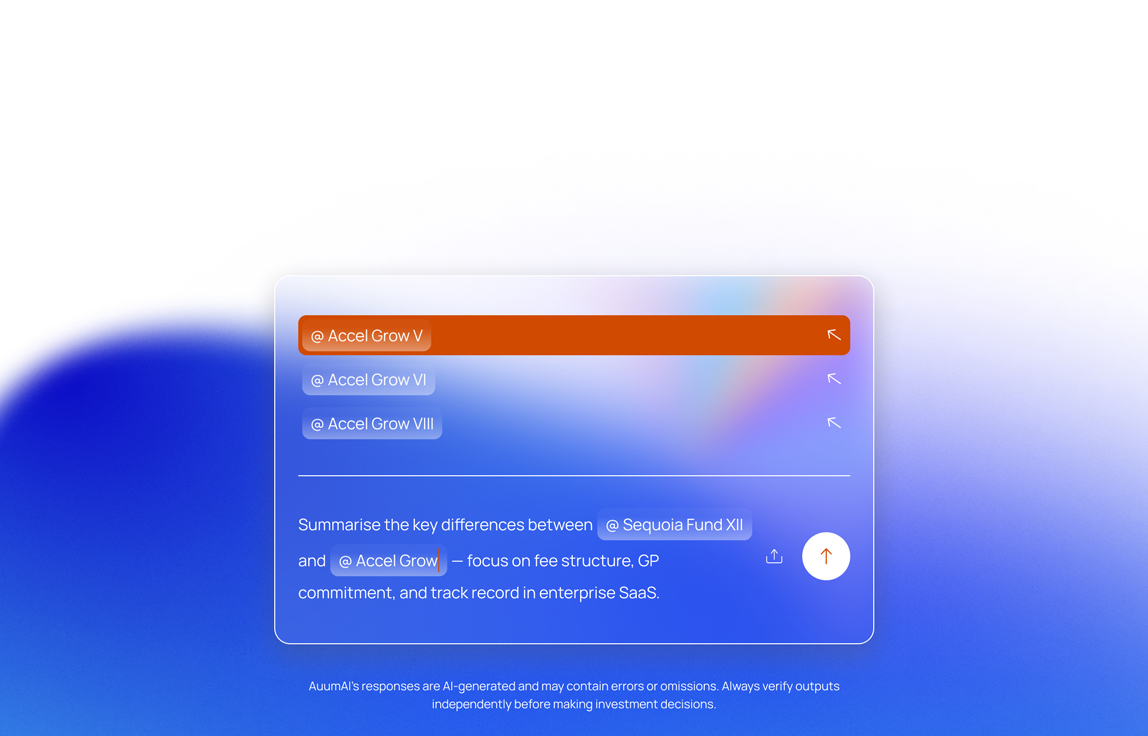
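One way to make a / command a typed object rather than free text is a discriminated union plus a strict parser. This is a minimal sketch under assumptions: the argument shapes for `/compare`, `/summarise` and `/draft-memo` are invented for illustration, and `parseSlash` is a hypothetical helper. The key design choice is the `default` branch: an unrecognised command is rejected outright instead of being interpreted.

```typescript
// Hypothetical command set; names mirror the examples in the text, arguments are assumed.
type Command =
  | { kind: "compare"; left: string; right: string }
  | { kind: "summarise"; target: string }
  | { kind: "draft-memo"; topic: string };

type ParseResult = { ok: true; command: Command } | { ok: false; error: string };

function parseSlash(input: string): ParseResult {
  const trimmed = input.trim();
  if (!trimmed.startsWith("/")) {
    return { ok: false, error: "not a slash command" };
  }
  // First token is the command name; the remaining tokens are its arguments.
  const [name, ...args] = trimmed.slice(1).split(/\s+/);
  switch (name) {
    case "compare": {
      // Exactly two operands, so there is no guessing what is being compared.
      if (args.length !== 2) return { ok: false, error: "/compare takes exactly two arguments" };
      const [left, right] = args;
      return { ok: true, command: { kind: "compare", left, right } };
    }
    case "summarise":
      if (args.length < 1) return { ok: false, error: "/summarise needs a target" };
      return { ok: true, command: { kind: "summarise", target: args.join(" ") } };
    case "draft-memo":
      if (args.length < 1) return { ok: false, error: "/draft-memo needs a topic" };
      return { ok: true, command: { kind: "draft-memo", topic: args.join(" ") } };
    default:
      // Unknown commands are refused, never reinterpreted as free text.
      return { ok: false, error: `unknown command /${name}` };
  }
}
```

Downstream code can then `switch` on `command.kind`, and the compiler flags any command type that is not handled.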

The @ references for people who can't afford ambiguity

Fund names overlap. Documents drift across versions. "Our EM portfolio" could mean three different things. Natural-language prompts leave the AI to resolve that ambiguity and hope it guesses right.

@ removes the guess: each reference binds to a specific record at composition time, so the AI inherits a precision it could not reliably infer on its own.

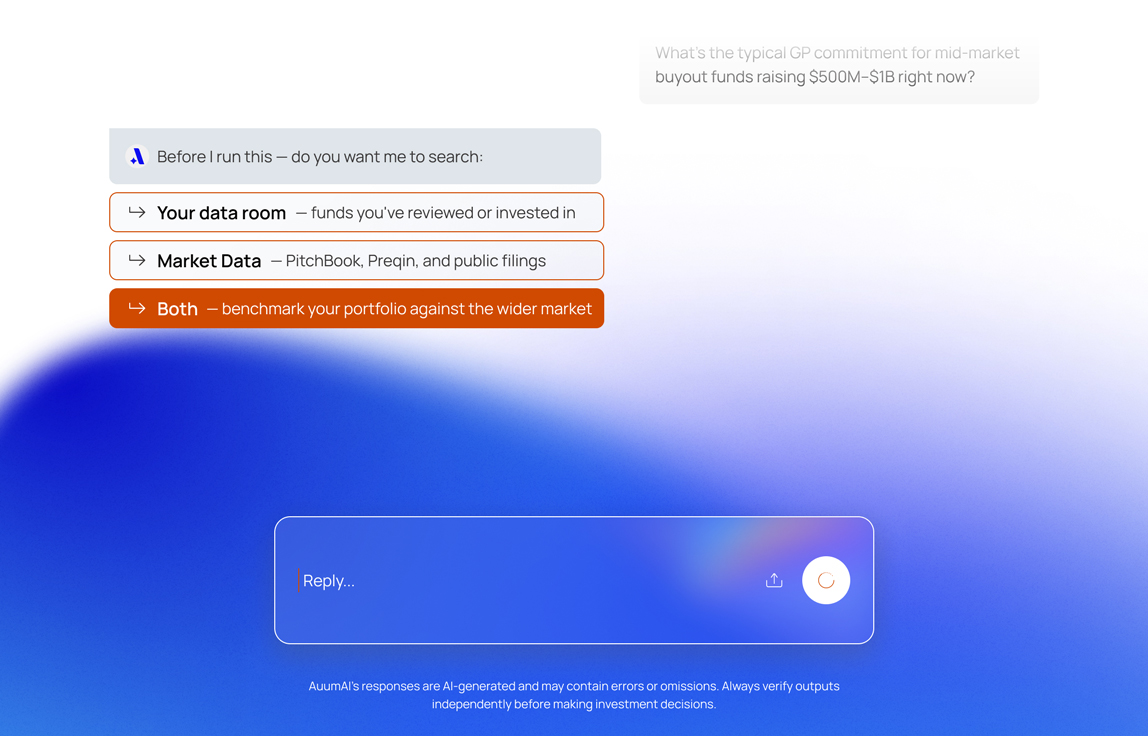
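Binding at composition time can be modelled by storing the chosen record alongside the text, so the model receives identifiers instead of names it would have to resolve. The sketch assumes a hypothetical `RecordRef` with an `id` and a `version`; the names `candidates`, `bindMention` and `toModelInput` are illustrative, not an AuumAI API. Two funds with near-identical names both show up as candidates, and the user, not the model, picks one.

```typescript
// Hypothetical record shape; ids and versions are illustrative.
interface RecordRef {
  id: string;
  label: string;   // what the user sees, e.g. a fund or document name
  version: number; // pins one specific version of the record
}

// A message being composed: free text plus references already bound to records.
interface ComposedPrompt {
  text: string;
  mentions: RecordRef[];
}

// Autocomplete: return every matching record so the user chooses, rather than the model.
function candidates(query: string, records: RecordRef[]): RecordRef[] {
  const q = query.toLowerCase();
  return records.filter((r) => r.label.toLowerCase().includes(q));
}

// Called when the user selects a candidate; this is where the binding happens.
function bindMention(prompt: ComposedPrompt, chosen: RecordRef): ComposedPrompt {
  return {
    text: `${prompt.text}@${chosen.label} `,
    mentions: [...prompt.mentions, chosen],
  };
}

// What the model receives: each mention carries its exact id and version.
function toModelInput(prompt: ComposedPrompt): string {
  const refs = prompt.mentions.map((m) => `[ref ${m.id} v${m.version}] ${m.label}`).join("\n");
  return `${prompt.text.trim()}\n\nReferences:\n${refs}`;
}
```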

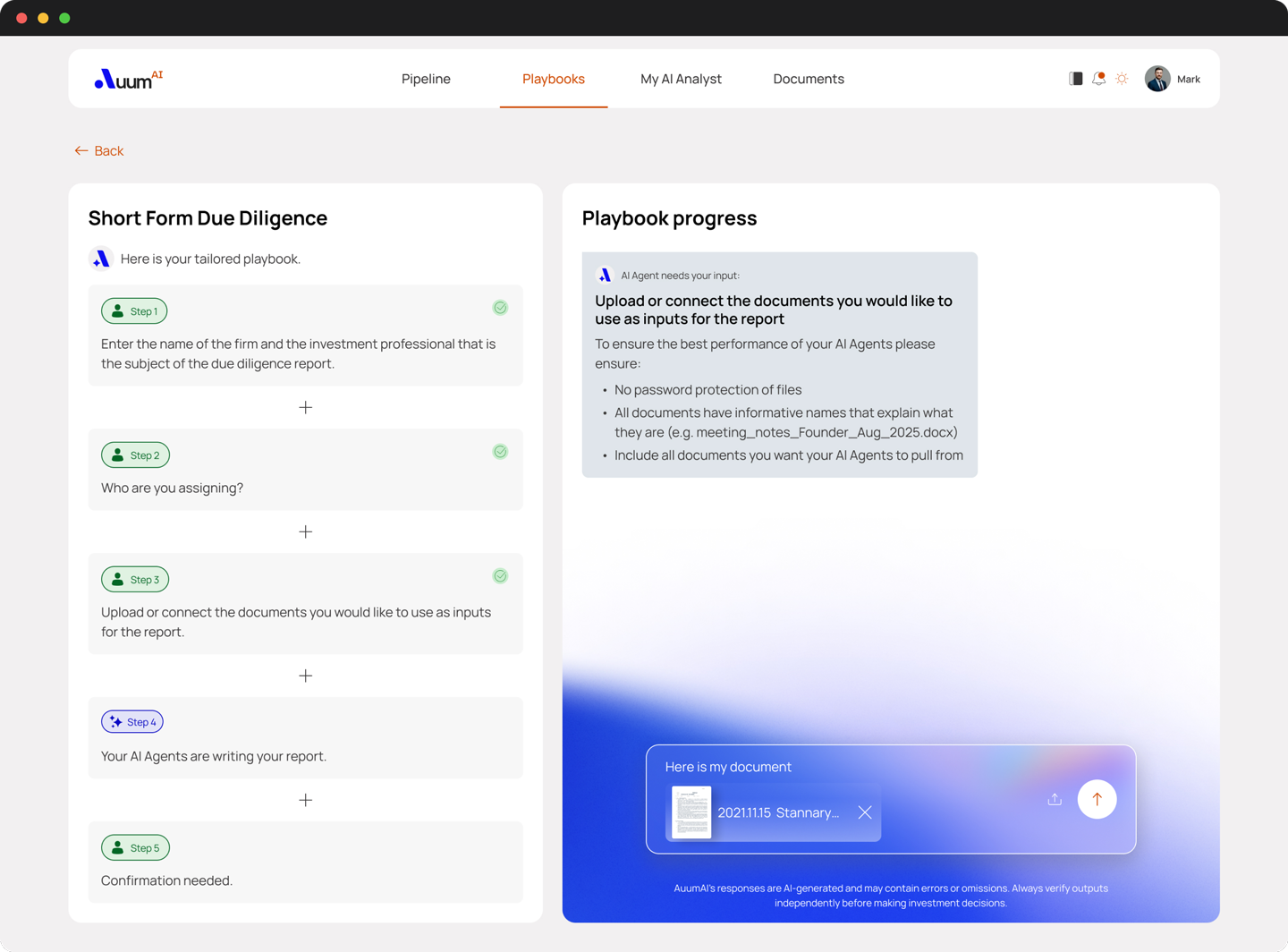

Human-in-the-loop checkpoint

Silent assumptions are the hardest kind of error to catch: the answer looks confident and plausible, yet it is quietly wrong. So AuumAI never assumes silently. At every branch point, it surfaces the options with the usual choice pre-selected. One click to confirm, one click to override.
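A checkpoint can be represented as explicit state, so the run cannot continue until each branch has a recorded choice. This is a hypothetical sketch: `Checkpoint`, `acceptDefault`, `chooseOption` and `canProceed` are illustrative names, and recording whether the user overrode the default is an assumed design choice (it would let a team see which defaults analysts tend to reject).

```typescript
interface ChoiceOption {
  id: string;
  label: string;
}

// A branch point the assistant surfaces instead of silently assuming (hypothetical shape).
interface Checkpoint {
  question: string;
  options: ChoiceOption[];
  defaultId: string; // the usual choice, shown pre-selected
}

type Resolution =
  | { status: "pending" }
  | { status: "resolved"; chosenId: string; overridden: boolean };

// One click: accept the pre-selected option.
function acceptDefault(cp: Checkpoint): Resolution {
  return { status: "resolved", chosenId: cp.defaultId, overridden: false };
}

// One click: pick a different option; ids not offered at this checkpoint are rejected.
function chooseOption(cp: Checkpoint, optionId: string): Resolution {
  if (!cp.options.some((o) => o.id === optionId)) {
    throw new Error(`unknown option ${optionId}`);
  }
  return { status: "resolved", chosenId: optionId, overridden: optionId !== cp.defaultId };
}

// The run only continues once every checkpoint has an explicit resolution.
function canProceed(resolutions: Resolution[]): boolean {
  return resolutions.every((r) => r.status === "resolved");
}
```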

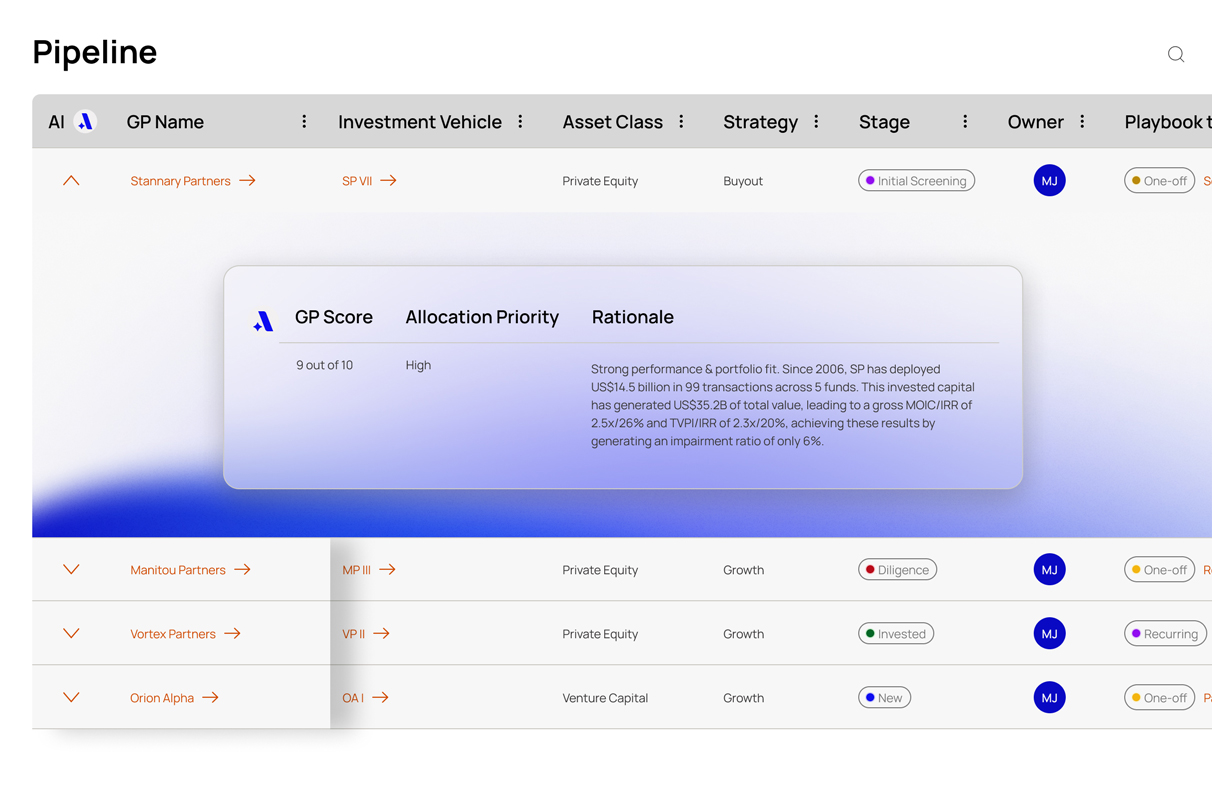

Provenance chain

Every number in an AuumAI response is bound to the document it came from — GP Score, rationale, paragraph, retrieval timestamp. Click a figure, open the proof. Compliance teams don't ask where the 18% IRR came from; they click on it.
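A provenance chain amounts to a figure type that cannot be constructed without its source. The sketch below is hypothetical and models only the source document, paragraph and retrieval timestamp; the GP Score and rationale listed above are left out because their structure isn't described here. The check that the displayed value appears verbatim in the quoted paragraph is an assumed safeguard, not a documented AuumAI behaviour.

```typescript
// Hypothetical source record for one figure.
interface Provenance {
  documentId: string;
  paragraph: number; // paragraph index within the source document
  quote: string;     // exact source text the figure was read from
  retrievedAt: Date;
}

// A displayed figure that always travels with its source.
interface SourcedFigure {
  value: string; // as displayed, e.g. "18%"
  label: string; // e.g. "IRR"
  source: Provenance;
}

// Assumed safeguard: refuse to emit a figure that is not in the quoted source text.
function sourced(value: string, label: string, source: Provenance): SourcedFigure {
  if (!source.quote.includes(value)) {
    throw new Error(`${label} ${value} not found in ${source.documentId} paragraph ${source.paragraph}`);
  }
  return { value, label, source };
}

// Text shown when the analyst clicks the figure.
function proofFor(fig: SourcedFigure): string {
  const s = fig.source;
  return `${fig.label} ${fig.value}: ${s.documentId}, paragraph ${s.paragraph}, ` +
    `retrieved ${s.retrievedAt.toISOString()}\n"${s.quote}"`;
}
```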